Automatic deployment updates with Flux

Learn how to install Flux, deploy an application with it, and configure automatic updates whenever a new version is published.

Flux, From Installation to Automatically Updating Deployment in 30 Minutes

What Are We Doing?

In this article, we set up Flux on a Kubernetes cluster, deploy an application via Flux, and then configure Flux to automatically update the application's container image whenever a new version is available.

We deploy this sample hello world website: https://github.com/mstruebing/static-sample. It is set up so that every new commit automatically builds a new Docker image, tags it with the current timestamp, and pushes it to DockerHub.

Look into the index.html, Dockerfile and .github/workflows/ci.yaml files to get a sense of what the application is doing.

The full Flux repository, with every step as a separate commit, can be found here: https://github.com/mstruebing/flux-demo

What Is Flux and the Advantages of GitOps

I could try to explain Flux in depth here, but I will never be as good as the official documentation. So I will keep it short; if you want to know more, I recommend reading the following pages:

Let’s explain a couple of terms first:

- Infrastructure as code (IaC): This is a way to describe your infrastructure in a declarative way with code.

- GitOps: A way to automatically update your infrastructure so that it matches its infrastructure-as-code definition stored in a Git repository.

Now we can say that Flux is a GitOps solution for Kubernetes: it automatically applies the infrastructure you defined as code in a Git repository to your Kubernetes cluster.

There are two different approaches to GitOps, push-based and pull-based; we are using the pull-based approach.

Push-based means that the update is typically triggered by a CI/CD pipeline. Pull-based means that services running in the cluster monitor your Git repository (or, for example, an image registry) and act on changes when they occur. These sources are usually polled at a fixed interval.

All of this has a couple of advantages:

- You can deploy fast, easily and often by simply pushing to a repository

- You can run git revert if you messed up your environment, and everything is back to how it was before. This means you can easily roll back to any previous state of your application or infrastructure

- Not everyone needs access to the actual infrastructure environment, access to the git repository is enough to manage the infrastructure

- Self-documenting infrastructure: you do not need to ssh into a server and look around at the running services, or explore all resources on a Kubernetes cluster

- Easy to create a demo environment by replicating the repository or creating a second deploy target

Installing Flux

We need a Kubernetes cluster up and running; the number of nodes doesn't matter. We also need the Flux CLI installed on our local system. You can find the installation instructions here: https://fluxcd.io/flux/installation/

If you have installed the Flux CLI and configured kubectl to connect to the right cluster, you can run flux check --pre to make sure your cluster is ready for Flux to be installed.

We need a personal access token for GitHub, which you can create here: https://github.com/settings/tokens. Generate a new token and select all scopes under repo.

Export your GitHub user name and your newly created token:
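A minimal sketch with placeholder values that you need to replace with your own. The Flux CLI's GitHub bootstrap reads the token from the GITHUB_TOKEN environment variable; GITHUB_USER is just a convenience variable for passing your user name as the repository owner.

```shell
# Placeholder values: replace them with your own GitHub user name and token.
export GITHUB_USER=your-github-username
# The leading space keeps this line out of the shell history (see the tip below).
 export GITHUB_TOKEN=ghp_your_personal_access_token
```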

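Tip: When you add a space in front of a shell command, that command is not saved to your shell history. In Bash this requires HISTCONTROL to contain ignorespace or ignoreboth (a common default); in Zsh the HIST_IGNORE_SPACE option must be set.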

Install Flux with the following command. I use clusters/lab as my path here because that's what my Kubernetes cluster is called, and that way it's easier to set up this repository for multiple clusters.

This installs Flux on your cluster, creates the Git repository in case it doesn't exist yet, adds a deploy key to the repository, and pushes all the needed resources into the repository.

The output should look similar to this:


Now, if we look into the newly created flux-system namespace, we can see that a couple of resources have been created:


These are required and come with the default installation of Flux; we will add other controllers later.

We also have a new resource type, gitrepositories.source.toolkit.fluxcd.io, where we can see our repository:

And now we have successfully installed Flux on our cluster.

Deploying Our Sample Application

To deploy our sample application, we need to create a Kustomization that tells Flux where to find our application definition in Git.

First, clone the repository that Flux created to your local machine. We will execute the next steps from the root of the repository.

This will create the following kustomization:
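A minimal sketch of a Kustomization matching the description that follows, written as a file into the cloned repository. The file name is a hypothetical choice, and apiVersion kustomize.toolkit.fluxcd.io/v1 assumes Flux 2.0 or newer; flux-system is the GitRepository that bootstrap creates.

```shell
# Run from the root of the cloned Flux repository.
mkdir -p clusters/lab
# Hypothetical file name; any YAML file under clusters/lab is picked up.
cat > clusters/lab/static-sample-kustomization.yaml <<'EOF'
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: static-sample
  namespace: flux-system
spec:
  interval: 5m          # check the path every 5 minutes
  path: ./clusters/lab/kustomize
  prune: true           # delete resources that were removed from Git
  sourceRef:
    kind: GitRepository
    name: flux-system   # the repository created by flux bootstrap
EOF
```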


This means: hey, look, this is a Kustomization called static-sample that lives in our Flux repository at the path ./clusters/lab/kustomize; please check it every 5 minutes and prune every resource that is no longer in the repository (prune: true).

Let’s create this kustomize directory and add a namespace first before we add our other resources:
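For example, assuming the namespace is called static-sample (the same namespace the image policy marker static-sample:static-sample refers to later in this article); the file name is a hypothetical choice:

```shell
# Create the directory the static-sample Kustomization points to.
mkdir -p clusters/lab/kustomize
cat > clusters/lab/kustomize/namespace.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: static-sample
EOF
```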


If we now push these changes, we should shortly see the namespace pop up on our Kubernetes cluster. You can run kubectl get ns --watch until it's there.

Now let’s add all our other resources to make this application available on the cluster.
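A sketch of what a Deployment, Service, and Ingress for this site could look like. Several details are assumptions rather than taken from the article: the DockerHub image name mstruebing/static-sample, the placeholder tag TIMESTAMP_TAG (replace it with a real tag), container port 80, and that the cluster has a default IngressClass. The host static.lab.cluster is the one used later in the article.

```shell
mkdir -p clusters/lab/kustomize

cat > clusters/lab/kustomize/deployment.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: static-sample
  namespace: static-sample
spec:
  replicas: 1
  selector:
    matchLabels:
      app: static-sample
  template:
    metadata:
      labels:
        app: static-sample
    spec:
      containers:
        - name: static-sample
          # Assumed image name; TIMESTAMP_TAG is a placeholder for a real tag.
          image: mstruebing/static-sample:TIMESTAMP_TAG
          ports:
            - containerPort: 80   # assumed port of the web server
EOF

cat > clusters/lab/kustomize/service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: static-sample
  namespace: static-sample
spec:
  selector:
    app: static-sample
  ports:
    - port: 80
      targetPort: 80
EOF

cat > clusters/lab/kustomize/ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: static-sample
  namespace: static-sample
spec:
  # No ingressClassName: assumes a default IngressClass exists.
  rules:
    - host: static.lab.cluster
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: static-sample
                port:
                  number: 80
EOF
```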


As this cluster is running on my local network, I will edit my /etc/hosts file to map the IP address of the control plane node to the host I've added in the ingress rule.

If we now push this and monitor our Kubernetes resources, we should see them pop up on the cluster. You can monitor them with:


When the resources are there the output should look similar to this:


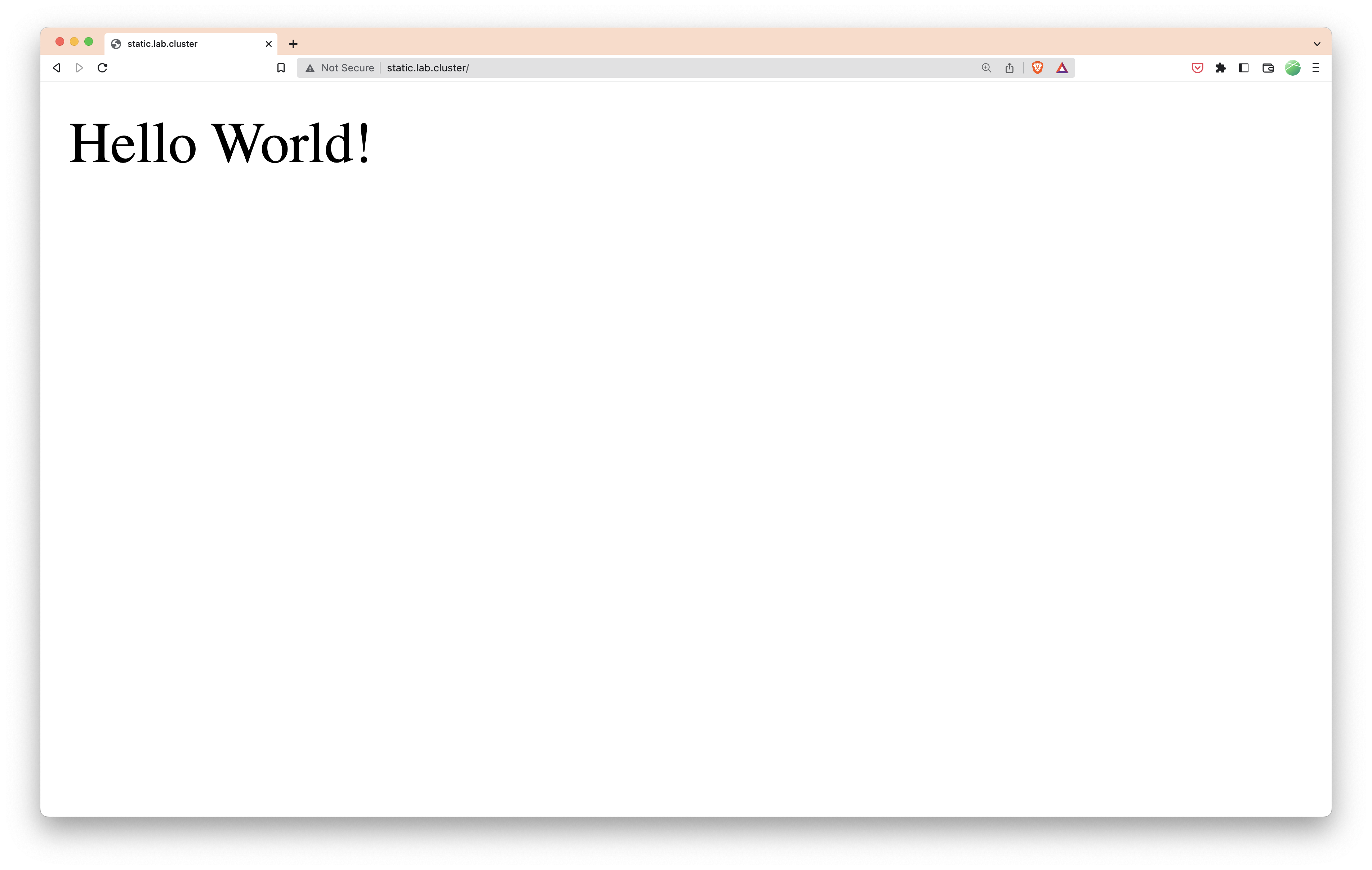

Once the resources are up, we can go to http://static.lab.cluster/ and should see our website.

That's great: whenever we push to our repository, Flux takes care of updating the resources on the cluster. Whether we change the number of replicas, the image tag, or anything else, it is handled automatically. We can also add any other Kubernetes resource we need; we are not limited to what we did here.

If you are following along, try to change the number of replicas and see what happens.

Automatic Image Update

What we did works, but it has one disadvantage if we want full automation. Whenever we change something in our application we manually need to update the image tag on our deployment.

Depending on your use case, you might want to keep this step manual, but in this example I don't.

To automate it, we need to install Flux with two additional components: the image reflector controller and the image automation controller.

Let's uninstall Flux and install it again with the needed components. Don't worry: as we have everything set up in our repository, it takes no effort to get back to this state.

If we look at the pods running in our flux-system namespace, we can see the controllers for these additional components running:


We need to add three resources: an image repository, which defines which image repository to scan; an image policy, which defines which image tags to use and how to order them; and an image update automation, which defines how we want to update our deployment.

Make sure to pull the repository before following along, because Flux committed some files to it during the new installation.
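A minimal sketch of the image repository, assuming the image lives at docker.io/mstruebing/static-sample, a 1-minute scan interval, and a hypothetical file name. The apiVersion image.toolkit.fluxcd.io/v1beta2 is an assumption too, since the served versions depend on your Flux release.

```shell
mkdir -p clusters/lab/kustomize
# Check which API versions your Flux release serves with:
#   kubectl api-resources --api-group=image.toolkit.fluxcd.io
cat > clusters/lab/kustomize/image-repository.yaml <<'EOF'
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImageRepository
metadata:
  name: static-sample
  namespace: static-sample
spec:
  image: docker.io/mstruebing/static-sample   # assumed image name
  interval: 1m                                 # how often to scan for tags
EOF
```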

We need to adjust the image policy to the following:
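A sketch of such a policy, assuming the CI pipeline produces purely numeric timestamp tags (for example a Unix epoch); with another tag format the regular expression has to change. The name and namespace static-sample match the marker used on the deployment; the apiVersion and file name are assumptions.

```shell
mkdir -p clusters/lab/kustomize
cat > clusters/lab/kustomize/image-policy.yaml <<'EOF'
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImagePolicy
metadata:
  name: static-sample
  namespace: static-sample
spec:
  imageRepositoryRef:
    name: static-sample
  filterTags:
    # Only accept tags made of digits and capture them as "ts".
    pattern: '^(?P<ts>[0-9]+)$'
    extract: '$ts'
  policy:
    numerical:
      order: asc   # ascending order: the highest value is the latest
EOF
```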


This means that we want to extract the timestamp from each image tag and sort the tags in ascending numerical order, so that the newest image is always selected. You can find a lot of other examples in the Flux documentation: https://fluxcd.io/docs/components/image/imagepolicies/#examples
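To illustrate what this selection does, here is a rough local analogue with made-up tags. It is not how the image reflector controller is implemented; it just filters out non-numeric tags, sorts the rest numerically, and takes the highest value, which is what an ascending numerical policy resolves to.

```shell
# Hypothetical tags: numeric timestamps plus one tag the filter should drop.
TAGS="1700000000 latest 1700003600 1699990000"

# Keep purely numeric tags, sort ascending, take the last (highest) one.
LATEST=$(printf '%s\n' $TAGS | grep -E '^[0-9]+$' | sort -n | tail -n 1)
echo "$LATEST"   # prints 1700003600
```

Sorting numerically rather than alphabetically matters once timestamps differ in length: alphabetically, 999 would come after 1000.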

We need to tell our deployment that it should use the image from the freshly created ImagePolicy:
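A sketch of a Deployment carrying the marker, assuming the image name mstruebing/static-sample and a placeholder tag TIMESTAMP_TAG; the file name is hypothetical. With the Setters strategy, the image automation replaces the whole image reference on the marked line with the one selected by the ImagePolicy.

```shell
mkdir -p clusters/lab/kustomize
cat > clusters/lab/kustomize/deployment.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: static-sample
  namespace: static-sample
spec:
  replicas: 1
  selector:
    matchLabels:
      app: static-sample
  template:
    metadata:
      labels:
        app: static-sample
    spec:
      containers:
        - name: static-sample
          # Marker format: "<ImagePolicy namespace>:<ImagePolicy name>".
          image: mstruebing/static-sample:TIMESTAMP_TAG # {"$imagepolicy": "static-sample:static-sample"}
          ports:
            - containerPort: 80
EOF
```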


We have added the comment # {"$imagepolicy": "static-sample:static-sample"} to the image line; its value needs to match the namespace and name of the ImagePolicy (namespace:name).

The last part is to create our image update automation with the following command:
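A sketch of the resulting ImageUpdateAutomation manifest. Assumptions: the branch is main, the apiVersion and file name, the commit author, and the 1-minute interval. By default an automation only applies ImagePolicies in its own namespace, so this sketch places it in static-sample and points to the flux-system GitRepository across namespaces, which Flux allows unless cross-namespace references are disabled. The controller pushes commits, so the repository credentials need write access.

```shell
mkdir -p clusters/lab/kustomize
cat > clusters/lab/kustomize/image-update-automation.yaml <<'EOF'
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImageUpdateAutomation
metadata:
  name: static-sample
  namespace: static-sample   # same namespace as the ImagePolicy
spec:
  interval: 1m
  sourceRef:
    kind: GitRepository
    name: flux-system
    namespace: flux-system
  git:
    checkout:
      ref:
        branch: main         # assumed branch name
    commit:
      author:
        name: fluxcdbot
        email: fluxcdbot@users.noreply.github.com
      messageTemplate: "Update static-sample image"
    push:
      branch: main
  update:
    path: ./clusters/lab     # where to look for $imagepolicy markers
    strategy: Setters
EOF
```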


We can now look at what Flux figured out:


We can see that Flux found some tags in the image repository, and which tag the latest version resolves to.

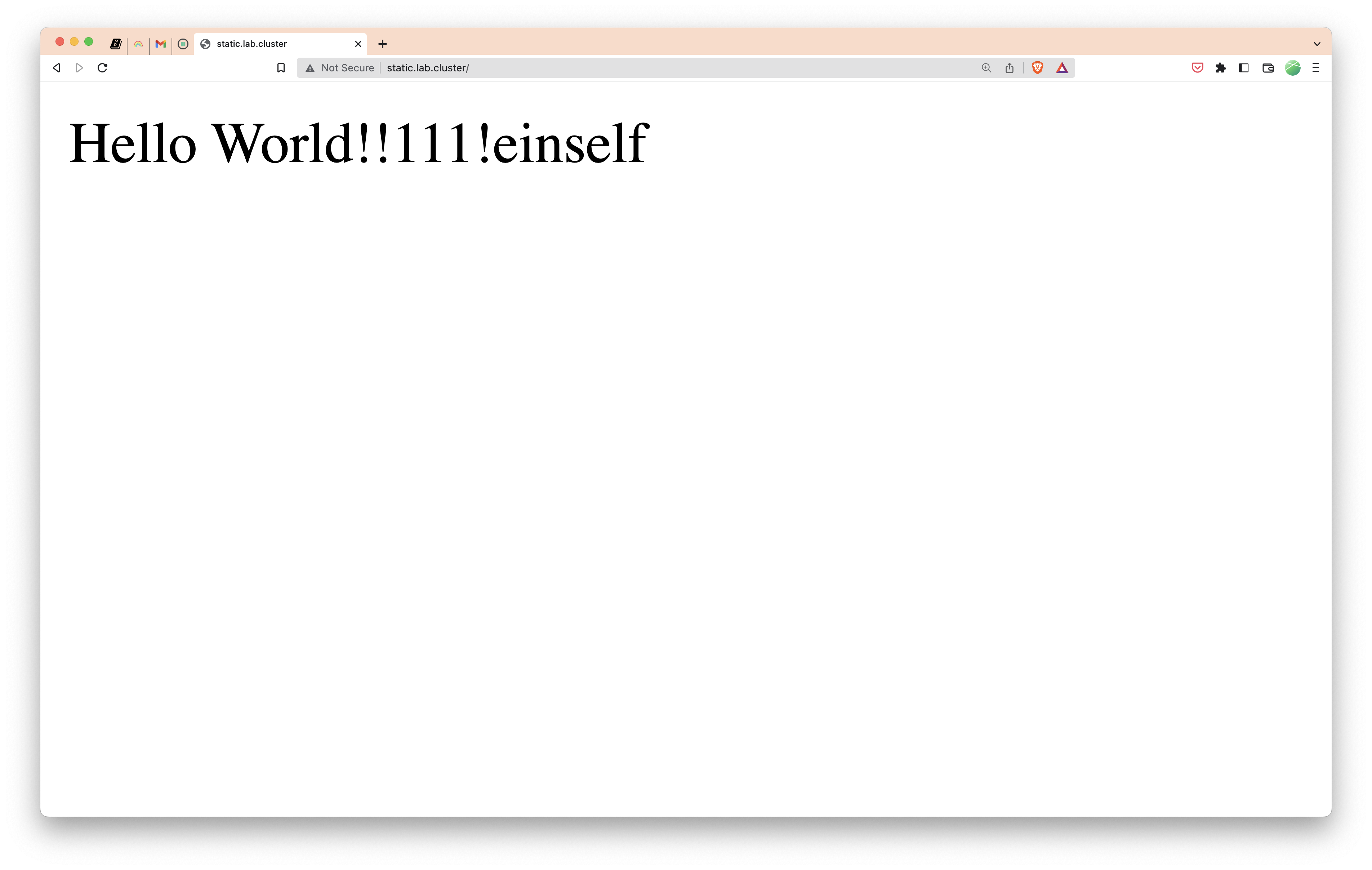

Now let's push an update to our sample application, for example by changing the "Hello World!" text.

We need to wait for the GitHub Action to build and push the image; in the meantime, we can monitor the Flux updates with:


Once it's ready, you will see that the image tag, the number of tags found, and the last commit have changed.

If we now go back to our website we can see our changes there:

That's it. You can do a lot more with Flux, and what I did may not be the optimal way of doing it, but it works for me, and I deploy several applications on a real Kubernetes cluster like this without issues.

The Flux documentation contains all of this information, but it can be hard to find your way around without prior experience, so I decided to write this blog post and hopefully inspire some people to try it out.